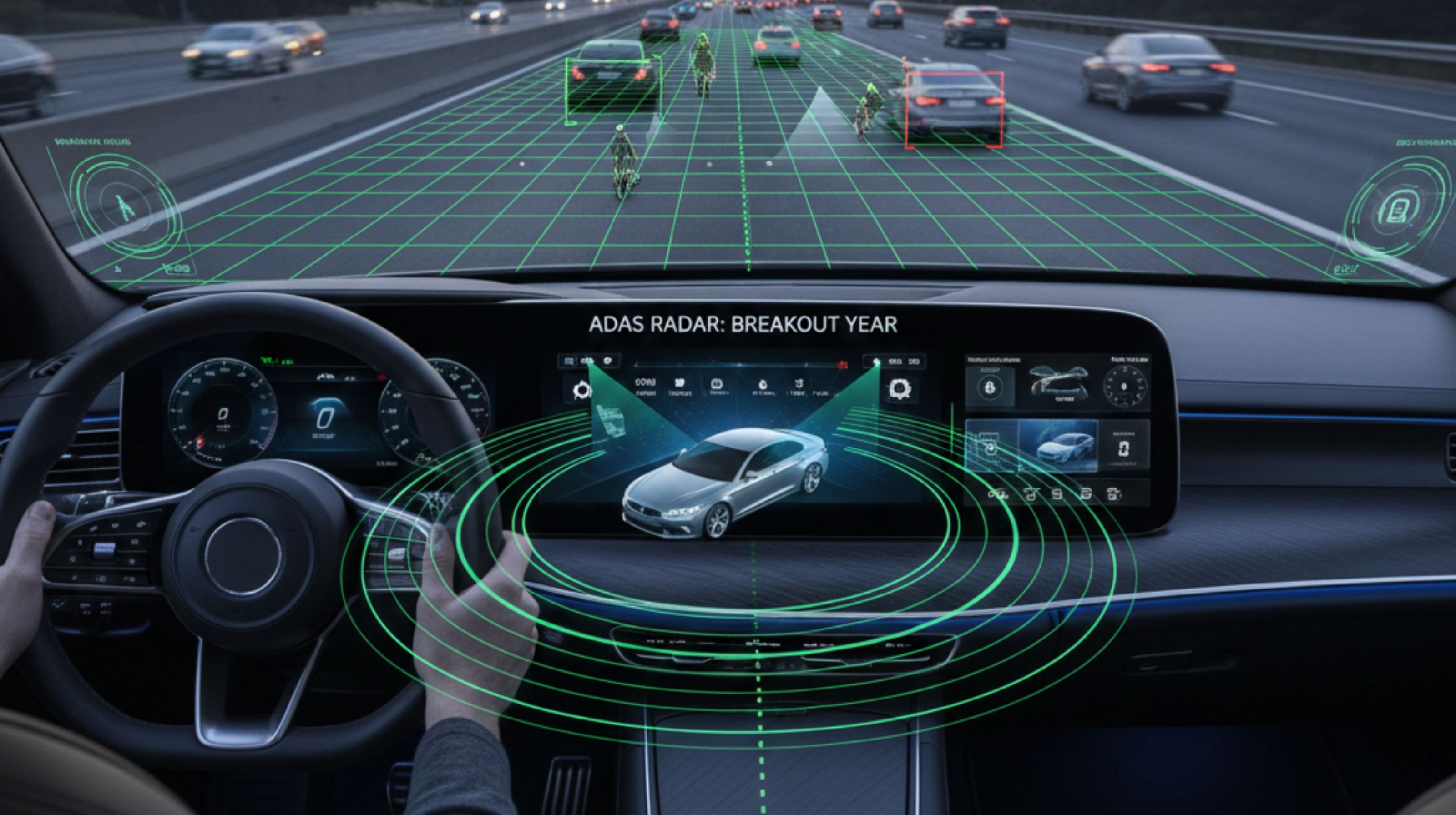

The Breakout Year for Radar-Based ADAS: Driving Advanced Vehicle Safety and Autonomy

The automotive industry is buzzing, and for good reason: 2024 is proving to be the breakout year for radar-based ADAS (Advanced Driver-Assistance Systems). After years of steady development and cautious integration, the technology has reached an inflection point. Three things are converging: technical advances, tightening regulation, and a clearer understanding of radar's indispensable role in the sensor-fusion stack.

What matters now is the practical reality of autonomous and semi-autonomous driving. Early narratives focused mostly on other sensor modalities. Many observers missed the quieter but more consequential shift: a steady strengthening of radar's capabilities. This is not just incremental improvement. It is a fundamental re-evaluation of radar's place at the center of robust perception systems. Ignoring this shift risks significant gaps in vehicle safety and performance, especially in challenging conditions where other sensors falter.

From working with businesses across the automotive supply chain, it is clear that manufacturers are no longer debating whether radar is necessary. The focus has shifted to optimizing its integration and maximizing its potential for precise object detection, speed measurement, and trajectory prediction, regardless of weather or lighting. Higher levels of driving automation demand a level of environmental robustness that radar delivers more consistently than any other current sensor. That makes the current surge a foundational step, not just an upgrade.

Here is what has changed recently. Advances in imaging radar and higher-resolution designs let these systems go beyond simple distance and velocity measurements. They can now generate rich point-cloud data that rivals lidar in certain applications, while keeping radar's inherent advantages in adverse conditions. This expansion far beyond radar's traditional roles is what truly defines the breakout year for radar-based ADAS.

Understanding the Breakthrough in Radar-Based ADAS

Radar-based ADAS refers to advanced driver-assistance systems that utilize radar sensors to detect objects, measure their speed and distance, and often their angle relative to the vehicle. These systems operate by emitting radio waves and analyzing the reflections, allowing them to "see" through fog, heavy rain, snow, and darkness, conditions where cameras struggle and lidar can be compromised. This robust performance in challenging environments is a cornerstone of why radar is experiencing such a pivotal moment.
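The idea of emitting a wave and analyzing its reflection can be made concrete with the two most basic radar measurements. The minimal Python sketch below assumes an idealized single reflection. It uses a 77 GHz carrier as its default, a common automotive radar band chosen here only for illustration. The function names are hypothetical and do not come from any radar SDK. Range follows from the round-trip delay. Radial (closing) speed follows from the Doppler shift, f_d = 2v/λ.

```python
# Illustrative sketch: idealized single-target range and Doppler math.
# The 77 GHz default carrier and all names are assumptions for illustration.

SPEED_OF_LIGHT_MPS = 299_792_458.0


def range_from_round_trip(delay_s: float) -> float:
    """Distance to a reflector; the wave travels out and back, so halve the path."""
    return SPEED_OF_LIGHT_MPS * delay_s / 2.0


def radial_velocity_from_doppler(doppler_hz: float, carrier_hz: float = 77e9) -> float:
    """Radial speed from the Doppler shift f_d = 2 * v / wavelength.

    Sign convention (assumed here): a positive shift means the target is approaching.
    """
    wavelength_m = SPEED_OF_LIGHT_MPS / carrier_hz
    return doppler_hz * wavelength_m / 2.0


if __name__ == "__main__":
    print(f"{range_from_round_trip(400e-9):.2f} m")          # 59.96 m
    print(f"{radial_velocity_from_doppler(10e3):.2f} m/s")   # 19.47 m/s
```

A 400 ns round trip corresponds to roughly 60 m. A 10 kHz Doppler shift at 77 GHz corresponds to about 19.5 m/s (roughly 70 km/h) of closing speed.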

The recent breakthrough isn't just about the sensors themselves, but also the sophisticated processing algorithms and software stacks that interpret their data. Modern radar units, particularly "imaging radar," offer significantly higher resolution, enabling better object classification—distinguishing between a car, a pedestrian, or a motorcycle, for instance—and more precise mapping of the vehicle's surroundings. This leap in fidelity transforms radar from a supplementary sensor into a primary one, underpinning critical safety functions across various driving scenarios.

This increased resolution and data richness allow radar to contribute more meaningfully to the vehicle's perception system, enhancing functions like adaptive cruise control, automatic emergency braking, blind-spot monitoring, and lane-change assist. What used to be a somewhat coarse instrument for detection is now a nuanced component capable of painting a detailed picture of the immediate road environment, even when visibility is near zero. This fundamental improvement in data quality and interpretability is a key driver for the current market acceleration.
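Adaptive cruise control shows why radar's direct range and range-rate measurements matter. A widely used textbook approach is a constant time-gap policy. The controller keeps a gap that grows with speed, and it commands acceleration from the gap error and the relative speed. The sketch below assumes that policy. The gains, time gap, and acceleration limits are hypothetical placeholders, not tuned parameters from any production system.

```python
# Sketch of a constant time-gap ACC law. All numeric defaults are hypothetical.


def acc_acceleration_command(
    gap_m: float,             # radar-measured distance to the lead vehicle
    ego_speed_mps: float,     # own speed from vehicle odometry
    lead_speed_mps: float,    # ego speed + radar-measured range rate
    time_gap_s: float = 1.8,
    standstill_gap_m: float = 5.0,
    k_gap: float = 0.23,      # gain on gap error (1/s^2)
    k_speed: float = 0.07,    # gain on relative speed (1/s)
    a_min_mps2: float = -3.5,
    a_max_mps2: float = 2.0,
) -> float:
    """Return a clamped acceleration request in m/s^2."""
    desired_gap_m = standstill_gap_m + time_gap_s * ego_speed_mps
    gap_error_m = gap_m - desired_gap_m
    relative_speed_mps = lead_speed_mps - ego_speed_mps  # negative when closing
    accel = k_gap * gap_error_m + k_speed * relative_speed_mps
    # Comfort and actuator limits; harsher braking is left to AEB.
    return max(a_min_mps2, min(a_max_mps2, accel))


if __name__ == "__main__":
    print(acc_acceleration_command(50.0, 25.0, 25.0))  # steady following
    print(acc_acceleration_command(20.0, 25.0, 10.0))  # closing fast: clamped
```

The speed-dependent gap is the design choice here: at higher speeds the controller keeps proportionally more distance. Clamping the output keeps ACC in a comfort envelope and leaves emergency braking to a separate function.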

Why 2024 Marks a Pivotal Year for Radar

Several converging factors make 2024 the breakout year for radar-based ADAS. First, regulatory frameworks worldwide, particularly in Europe and North America, are raising the bar for active safety features. The EU's General Safety Regulation made automatic emergency braking mandatory for new cars registered from July 2024. In the United States, NHTSA finalized a 2024 rule that will require AEB on new passenger cars and light trucks. The rules themselves are technology-neutral. Meeting them reliably at night and in poor weather, however, calls for exactly the all-weather performance that radar offers. As a result, manufacturers are treating radar as a core component rather than an option.

Second, the drive toward higher levels of automated driving demands redundancy and diversity in sensor modalities, even though full Level 5 is still far off. Relying solely on cameras or lidar leaves critical gaps in adverse conditions. Radar provides a crucial layer of robustness and acts as a reliable anchor for the perception stack. Early deployments have taught the industry that safety-critical systems cannot afford environmental fragility.

Finally, cost-effectiveness and miniaturization have played a significant role. The manufacturing processes for radar sensors have become more efficient, bringing down unit costs and making their widespread adoption more economically viable. Simultaneously, smaller form factors allow for more discreet and integrated placement within vehicle designs, moving beyond the bulky early prototypes to sleek, production-ready implementations. This combination of regulatory pull, technological push, and economic viability has created the perfect storm for radar's ascendancy.

Radar vs. Other Sensor Modalities: A Crucial Distinction

While often compared to cameras and lidar, radar possesses unique advantages that make it an indispensable part of any robust ADAS or autonomous driving system. A common misconception is that all sensors are interchangeable, but each serves a distinct, complementary purpose. Cameras excel at object classification, traffic light detection, and lane marking recognition in good lighting. Lidar, with its highly detailed 3D point clouds, provides unparalleled spatial mapping and object shape identification in clear conditions.

However, both cameras and lidar face significant limitations in adverse weather. Heavy rain, fog, snow, or low-sun glare can severely degrade camera performance, leading to blinded sensors or misinterpretations. Lidar is robust in some respects, but precipitation, dust, and highly reflective surfaces can scatter or deflect its laser beams. This is where radar shines. Its radio waves pass through these conditions with far less degradation, so it keeps delivering consistent distance and velocity data largely independent of visibility.

The contrarian insight is often overlooked. Cameras and lidar capture the "what" and "where" best in favorable conditions. Radar delivers the "how fast" and "how far," the fundamental physics of motion, in nearly every condition, and it measures radial velocity directly from the Doppler shift. This is not just an advantage. It is a non-negotiable requirement for safety-critical functions like automatic emergency braking (AEB), where milliseconds matter and environmental interference cannot be tolerated. The real power lies in sensor fusion: radar's robust raw data underpins and cross-checks the outputs of other sensors, producing a comprehensive and trustworthy environmental model.
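Inverse-variance weighting is one simple way to see how radar can anchor a fused estimate. Each sensor's measurement is weighted by how certain it is. The Python sketch below fuses independent range estimates from two sensors. The variances are hypothetical. Production stacks typically use Kalman-filter-based tracking with data association rather than this single-shot formula.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class RangeEstimate:
    value_m: float
    variance_m2: float  # must be > 0; smaller means more trusted


def fuse(estimates: Sequence[RangeEstimate]) -> RangeEstimate:
    """Inverse-variance weighted fusion of independent, unbiased estimates."""
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = [1.0 / e.variance_m2 for e in estimates]
    total_weight = sum(weights)
    value = sum(w * e.value_m for w, e in zip(weights, estimates)) / total_weight
    return RangeEstimate(value_m=value, variance_m2=1.0 / total_weight)


if __name__ == "__main__":
    radar = RangeEstimate(50.0, 0.01)          # hypothetical: ~0.1 m std. dev.
    camera = RangeEstimate(52.0, 1.0)          # hypothetical: ~1 m std. dev., clear weather
    foggy_camera = RangeEstimate(52.0, 25.0)   # hypothetical: inflated in fog
    print(fuse([radar, camera]))
    print(fuse([radar, foggy_camera]))
```

With these numbers the fused range is about 50.02 m in clear weather and about 50.001 m in fog. As the camera's uncertainty grows, the result leans further on radar. The fused variance is always smaller than that of either input.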

Real-World Applications and Use Cases

The expansion of radar's capabilities is evident across a growing suite of ADAS features that benefit directly from its reliable detection. Beyond the familiar Adaptive Cruise Control (ACC) and Blind Spot Monitoring (BSM), modern radar units are enabling more sophisticated functionalities.

- Automatic Emergency Braking (AEB) and Forward Collision Warning (FCW): These are perhaps the most critical applications, relying on radar's ability to precisely measure the distance and closing speed to obstacles ahead, initiating warnings or braking actions far quicker and more reliably than human reaction.

- Rear Cross-Traffic Alert (RCTA) and Lane Change Assist (LCA): Radar sensors mounted at the rear of the vehicle detect approaching vehicles when backing out of parking spots or attempting lane changes, providing warnings even when the driver's view is obstructed.

- Parking Assist Systems: High-resolution radar can assist in identifying parking spaces and guiding vehicles into them, complementing ultrasonic sensors with better range and environmental robustness.

- Highway Driving Assist: For Level 2 and Level 2+ systems, radar provides the backbone for maintaining safe following distances and supporting automated highway maneuvers by constantly monitoring surrounding traffic. Cameras typically handle lane keeping by tracking lane markings.

- Vulnerable Road User (VRU) Detection: While challenging, advanced imaging radar is increasingly capable of detecting pedestrians and cyclists, even in low light or adverse weather, significantly enhancing urban safety.
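The AEB and FCW functions in the list above rest on a simple quantity that radar measures well: time-to-collision (TTC), the current range divided by the closing speed. The minimal sketch below assumes a constant closing speed. The warning and braking thresholds are hypothetical. Real systems also account for relative acceleration, driver reaction time, and braking capability.

```python
import math


def time_to_collision_s(range_m: float, closing_speed_mps: float) -> float:
    """Constant-velocity TTC; infinite when the gap is not shrinking."""
    if closing_speed_mps <= 0.0:
        return math.inf
    return range_m / closing_speed_mps


def aeb_stage(
    range_m: float,
    closing_speed_mps: float,
    warn_ttc_s: float = 2.6,   # hypothetical FCW threshold
    brake_ttc_s: float = 1.4,  # hypothetical AEB threshold
) -> str:
    """Map radar range and closing speed to 'none', 'warn', or 'brake'."""
    ttc = time_to_collision_s(range_m, closing_speed_mps)
    if ttc <= brake_ttc_s:
        return "brake"
    if ttc <= warn_ttc_s:
        return "warn"
    return "none"


if __name__ == "__main__":
    print(aeb_stage(40.0, 20.0))   # TTC 2.0 s -> warn
    print(aeb_stage(20.0, 20.0))   # TTC 1.0 s -> brake
    print(aeb_stage(40.0, -2.0))   # gap opening -> none
```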

On today's production platforms, radar's role is expanding from simple detection to informing complex decisions, especially in dense traffic or poor visibility. This expansion marks radar's maturation from a niche sensor into a central pillar of automotive perception.

Common Misconceptions and Deployment Challenges

Despite its accelerating adoption, radar technology in ADAS still faces misconceptions and deployment challenges that are often overlooked in the hype cycle. One major misconception is that radar is only effective for long-range detection and lacks the granularity for near-field applications or precise object classification. While this was true for older generations, modern imaging radar is changing that narrative, offering increasingly fine resolution and the ability to differentiate between multiple objects in close proximity.
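Two standard rules of thumb explain why newer radars resolve closely spaced objects better. The first covers range. For an FMCW radar, range resolution is ΔR = c / (2B), so it improves with the sweep bandwidth B. The second covers angle. At boresight, a uniform array with half-wavelength spacing has an angular resolution of roughly 2/N radians, so it improves with the number of virtual receive channels N. Imaging radars multiply N through MIMO. The bandwidths and channel counts below are illustrative, not specifications of any product.

```python
import math

SPEED_OF_LIGHT_MPS = 299_792_458.0


def range_resolution_m(sweep_bandwidth_hz: float) -> float:
    """FMCW range resolution: dR = c / (2 * B)."""
    return SPEED_OF_LIGHT_MPS / (2.0 * sweep_bandwidth_hz)


def boresight_angular_resolution_deg(num_virtual_channels: int) -> float:
    """Approximate resolution of a uniform linear array with lambda/2 spacing.

    Uses dtheta ~= 2 / N radians, valid near boresight only.
    """
    return math.degrees(2.0 / num_virtual_channels)


if __name__ == "__main__":
    for bandwidth_hz in (1e9, 4e9):
        res_cm = range_resolution_m(bandwidth_hz) * 100
        print(f"B = {bandwidth_hz / 1e9:.0f} GHz -> {res_cm:.1f} cm")
    # e.g. 3 TX x 4 RX = 12 virtual channels vs. 12 TX x 16 RX = 192
    for channels in (12, 192):
        print(f"N = {channels} -> {boresight_angular_resolution_deg(channels):.1f} deg")
```

Going from 1 GHz to 4 GHz of bandwidth sharpens range resolution from about 15 cm to about 3.7 cm. Going from 12 to 192 virtual channels sharpens angular resolution from about 9.5° to about 0.6°.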

A significant deployment challenge is the sheer volume and complexity of the data that advanced radar systems generate. Interpreting this raw data, filtering out noise, and fusing it effectively with camera and lidar inputs requires capable edge computing and robust software algorithms. The practical takeaway is that advanced sensors alone are not enough. The real bottleneck is processing and interpreting their combined output in real time, and this is a major area of ongoing development for OEMs and Tier 1 suppliers.
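In radar processing, filtering out noise usually starts with adaptive thresholding. A classic technique is cell-averaging CFAR (constant false alarm rate). Each cell is compared against the average power of its neighbors. A few guard cells are skipped so that a target does not raise its own threshold. The one-dimensional Python sketch below works on a list of power values, for example one range profile. The window sizes and scale factor are hypothetical. Real pipelines run variants of this over 2D range-Doppler maps.

```python
from typing import List, Sequence


def ca_cfar(
    power: Sequence[float],
    num_train: int = 8,   # training cells on each side (hypothetical)
    num_guard: int = 2,   # guard cells on each side (hypothetical)
    scale: float = 5.0,   # threshold multiplier; sets the false-alarm rate
) -> List[int]:
    """Return indices of cells whose power exceeds scale * local noise estimate."""
    half_window = num_train + num_guard
    detections: List[int] = []
    # Edge cells without a full window on both sides are skipped for simplicity.
    for i in range(half_window, len(power) - half_window):
        leading = power[i - half_window : i - num_guard]
        trailing = power[i + num_guard + 1 : i + half_window + 1]
        noise_estimate = (sum(leading) + sum(trailing)) / (2 * num_train)
        if power[i] > scale * noise_estimate:
            detections.append(i)
    return detections


if __name__ == "__main__":
    profile = [1.0] * 64    # flat, hypothetical noise floor
    profile[20] = 30.0      # strong reflector
    profile[45] = 12.0      # weaker reflector
    print(ca_cfar(profile))  # [20, 45]
```

Because the threshold follows the local noise level, the same code adapts when clutter raises the noise floor in one part of the scene. A fixed global threshold would either miss weak targets or flood the tracker with false alarms.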

Another challenge is calibration and integration. For reliable performance, radar sensors must be precisely aligned, and their data must be accurately correlated with other vehicle systems and map data. Even slight misalignments can cause erroneous detections or missed objects, which is why rigorous manufacturing and maintenance protocols are essential. Any account of sensor capabilities that skips these practical integration hurdles is incomplete.
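A short calculation shows why even a slight misalignment matters. If a radar's mounting yaw is off by a small angle, every detection is shifted sideways by roughly range × sin(error). The sketch below assumes a pure yaw error and ignores other calibration terms. The 3.5 m lane width is a typical figure, used only for comparison.

```python
import math


def lateral_offset_m(range_m: float, yaw_error_deg: float) -> float:
    """Lateral displacement of a detection caused by a pure yaw misalignment."""
    return range_m * math.sin(math.radians(yaw_error_deg))


if __name__ == "__main__":
    lane_width_m = 3.5  # typical highway lane, for comparison only
    for range_m in (25.0, 50.0, 100.0, 150.0):
        offset = lateral_offset_m(range_m, 1.0)
        print(f"{range_m:5.0f} m: {offset:.2f} m ({offset / lane_width_m:.0%} of a lane)")
```

With a 1° error, a vehicle 100 m ahead appears about 1.75 m to the side, half a typical lane width. That is enough to confuse which lane a distant vehicle occupies.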

Best Practices for Leveraging Radar-Based ADAS

To maximize the benefits of radar-based ADAS and truly capitalize on its breakout year, manufacturers and integrators are adopting several best practices that go beyond simply installing the sensors.

- Prioritize Sensor Fusion: Do not treat radar as an isolated component. Its true power is unlocked when its data is seamlessly fused with inputs from cameras, lidar, and ultrasonic sensors. This redundancy and diversity create a comprehensive, robust, and validated environmental model, mitigating the weaknesses of any single sensor.

- Invest in Advanced Perception Algorithms: The quality of the software that processes and interprets radar data is as crucial as the sensor hardware itself. Investing in AI-driven algorithms for object classification, trajectory prediction, and scene understanding ensures that the rich data from modern radar is fully utilized.

- Focus on Environmental Robustness: While radar naturally excels here, testing and validation should rigorously challenge the entire ADAS system in extreme weather conditions. This builds confidence in the system’s ability to perform reliably when human drivers are most vulnerable.

- Standardize Communication Protocols: Ensuring seamless communication and data exchange between radar units and the central vehicle control unit is vital. Adopting standardized automotive protocols helps simplify integration and improves system reliability.

- Continuous OTA Updates: As radar technology and algorithms evolve, over-the-air (OTA) software updates let vehicles already on the road keep benefiting from improvements in perception and safety features without a visit to a service center.
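The perception-algorithm practice above mentions trajectory prediction. Before any learned model, a classical baseline for smoothing radar measurements and extrapolating motion is the alpha-beta filter, a fixed-gain simplification of the Kalman filter. The sketch below tracks a single coordinate (range) under a constant-velocity assumption. The gains and the 20 Hz update rate are hypothetical. In practice they would be tuned, or the filter replaced by a full Kalman filter.

```python
from dataclasses import dataclass


@dataclass
class AlphaBetaTrack:
    """One-dimensional constant-velocity tracker with fixed gains (hypothetical)."""

    position_m: float
    velocity_mps: float
    alpha: float = 0.5  # how strongly position follows the measurement
    beta: float = 0.1   # how strongly velocity reacts to the residual

    def update(self, measured_position_m: float, dt_s: float) -> None:
        # Predict forward with the current velocity estimate.
        predicted_m = self.position_m + self.velocity_mps * dt_s
        residual_m = measured_position_m - predicted_m
        # Correct position and velocity using the fixed gains.
        self.position_m = predicted_m + self.alpha * residual_m
        self.velocity_mps += (self.beta / dt_s) * residual_m

    def predict(self, horizon_s: float) -> float:
        """Extrapolated position after horizon_s seconds."""
        return self.position_m + self.velocity_mps * horizon_s


if __name__ == "__main__":
    dt_s = 0.05  # hypothetical 20 Hz radar cycle
    track = AlphaBetaTrack(position_m=100.0, velocity_mps=0.0)
    for k in range(1, 101):
        true_range_m = 100.0 - 10.0 * k * dt_s  # target closing at 10 m/s
        track.update(true_range_m, dt_s)
    print(f"range {track.position_m:.2f} m, rate {track.velocity_mps:.2f} m/s")
    print(f"predicted range in 1 s: {track.predict(1.0):.2f} m")
```

With noise-free measurements, the track starts with no velocity knowledge and converges to about 50 m and -10 m/s after 100 updates. The one-second prediction lands near 40 m.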

These practices underscore that the "breakout" is not just about the hardware, but a holistic approach to system design and continuous improvement, ensuring radar's foundational contribution to the safety and autonomy mission.

The Road Ahead: What’s Next for Radar in ADAS

Looking ahead, the momentum behind radar-based ADAS is set to intensify. Expect continued advances in imaging radar that push resolution even higher and deliver richer 4D point-cloud data, further blurring the line between radar and lidar in object detail. This will unlock more sophisticated ADAS functions, particularly in complex urban environments.
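In imaging-radar terminology, "4D" commonly means that each detection carries range, azimuth, elevation, and Doppler (radial) velocity. Turning such detections into a point cloud that can be overlaid with lidar data is a spherical-to-Cartesian conversion. The sketch assumes a sensor frame with x forward, y left, and z up, with azimuth measured positive to the left. Real sensors document their own frame conventions, and the type names here are hypothetical.

```python
import math
from typing import NamedTuple


class RadarDetection(NamedTuple):
    range_m: float
    azimuth_deg: float      # assumed positive to the left
    elevation_deg: float    # assumed positive upward
    radial_velocity_mps: float


class CartesianPoint(NamedTuple):
    x_m: float  # forward
    y_m: float  # left
    z_m: float  # up
    radial_velocity_mps: float


def to_cartesian(det: RadarDetection) -> CartesianPoint:
    """Convert one 4D detection into a point in the assumed sensor frame."""
    az = math.radians(det.azimuth_deg)
    el = math.radians(det.elevation_deg)
    horizontal_m = det.range_m * math.cos(el)  # projection onto the ground plane
    return CartesianPoint(
        x_m=horizontal_m * math.cos(az),
        y_m=horizontal_m * math.sin(az),
        z_m=det.range_m * math.sin(el),
        radial_velocity_mps=det.radial_velocity_mps,
    )


if __name__ == "__main__":
    print(to_cartesian(RadarDetection(40.0, 5.0, 2.0, -8.0)))
```

The Doppler value stays a radial quantity. Recovering a full velocity vector requires combining several detections or tracking the object over time.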

The push for Level 3 (conditional automation) and even early Level 4 systems will cement radar’s status as a non-negotiable core sensor. As vehicles assume more responsibility for driving tasks, the absolute requirement for reliable, all-weather perception will drive further investment and innovation in radar. Expect to see greater integration with vehicle-to-everything (V2X) communication systems, allowing radar data to be shared and enhanced by network-level insights.

This breakout year for radar-based ADAS is not a peak but a new baseline. The trajectory points to ongoing evolution in sensor-fusion strategies, more intelligent data processing at the edge, and increasing reliance on radar as the foundational layer of safety. The future of driver assistance and autonomous mobility hinges on robust perception. Radar has proven its enduring, critical role in achieving that vision and in keeping roads safer for everyone, whatever the weather throws at us.
